1. What Does AIoT Mean? Definition and Key Capabilities

AIoT, short for Artificial Intelligence of Things, combines the data connectivity of IoT with the intelligence of AI. According to Wikipedia, AIoT integrates AI algorithms with IoT infrastructures so that devices can “analyze data, make decisions, and act” without constant cloud dependence. In practice, that means embedding smart algorithms in connected devices to enable real-time decisions, automation, and predictive insights. This guide covers AIoT’s definition, how it differs from AI + IoT, and real-world applications.

AIoT’s Three Core Capabilities

Industry analysts like Gartner highlight that these capabilities are what transform IoT from “data collection networks” into intelligent, context-aware systems.

- Intelligent Data Processing – IoT devices collect data and use AI for real-time analysis and optimization, preventing data overload and inefficient storage.

- Self-Learning & Decision-Making – AI empowers IoT devices with adaptive capabilities, enabling them to make autonomous decisions rather than just executing preset commands.

- Smart Collaboration – AIoT devices interact through multi-device networking, forming distributed intelligent systems to optimize complex tasks, such as traffic signal adjustments in smart cities.
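As a rough illustration of the first capability, the sketch below shows one way a device could avoid data overload: it forwards a reading upstream only when the value departs from its recent average. The `EdgeFilter` class, the window size, and the threshold are assumptions made for this example, not part of any specific AIoT platform.

```python
from collections import deque
from statistics import mean


class EdgeFilter:
    """Forward a reading only when it departs from the recent average."""

    def __init__(self, window: int = 5, threshold: float = 2.0):
        self.history = deque(maxlen=window)  # most recent readings
        self.threshold = threshold           # allowed deviation before reporting

    def should_forward(self, value: float) -> bool:
        # With no history yet, forward the first reading as a baseline.
        if not self.history:
            self.history.append(value)
            return True
        deviation = abs(value - mean(self.history))
        self.history.append(value)
        return deviation > self.threshold


def filter_stream(values, window=5, threshold=2.0):
    """Return the subset of readings that would be sent upstream."""
    edge_filter = EdgeFilter(window, threshold)
    return [v for v in values if edge_filter.should_forward(v)]
```

With temperature readings such as `[20.0, 20.1, 19.9, 25.0, 20.0]`, only the baseline and the spike would be transmitted, which is the kind of local reduction the list above describes.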

How AIoT Differs from AI + IoT

Unlike simply adding AI to IoT, AIoT emphasizes multi-layer coordination across devices, edge computing, and cloud systems:

- Device Layer: Sensors and smart hardware perform localized AI inference.

- Edge Computing Layer: Aggregates data, performs real-time processing, and updates AI models.

- Cloud Computing Layer: Handles large-scale data training, global management, and distribution.

This approach reduces network latency and bandwidth requirements while enhancing the intelligence of each connected node. AIoT transforms IoT applications from basic monitoring and control to cross-scenario, multi-device collaboration, enhancing industries such as manufacturing, energy, transportation, healthcare, and consumer electronics.
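The division of labor between the three layers can be sketched in a few lines. This is a toy model under stated assumptions: the "model" is a single alert threshold, and the names `Device`, `edge_aggregate`, and `cloud_retrain` are hypothetical. Real deployments would distribute trained models rather than one number.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Device:
    """Device layer: runs inference locally with a parameter sent from the cloud."""
    threshold: float

    def infer(self, reading: float) -> bool:
        # Local decision, no network round trip required.
        return reading > self.threshold


def edge_aggregate(readings):
    """Edge layer: reduce raw readings to a compact summary before upload."""
    return {"count": len(readings), "mean": mean(readings), "max": max(readings)}


def cloud_retrain(summaries, margin=1.5):
    """Cloud layer: derive a new global threshold from all edge summaries."""
    total = sum(s["count"] for s in summaries)
    global_mean = sum(s["mean"] * s["count"] for s in summaries) / total
    return global_mean * margin


# One cycle: edges summarize, the cloud retrains, devices receive the update.
summaries = [edge_aggregate([10.0, 12.0]), edge_aggregate([20.0])]
device = Device(threshold=cloud_retrain(summaries))
```

Only the summaries travel to the cloud and only the updated threshold travels back, which is where the latency and bandwidth savings come from.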

2. AIoT Edge-Cloud Architecture Explained

AIoT operates on an edge-cloud architecture, allowing data processing at different levels rather than relying entirely on cloud computing. As noted by Synaptics, this hybrid design minimizes latency, improves privacy, and enables AI inference closer to the data source.

The following AIoT architecture diagram illustrates the device (edge), edge computing, and cloud computing layers and AI’s role at each stage.

graph TB
subgraph "Device Layer (Edge)"
    A1[Smart Sensors]
    A2[Industrial Robots]
    A3[Smart Home Devices]
    A4[Smart Cameras]
    A5[Wearable Devices]
    A6[Drones]
end
subgraph "Edge Computing Layer"
    B1[Edge Gateways]
    B2[Edge Servers]
    B3["5G MEC (Multi-Access Edge Computing)"]
    B4[Local AI Inference Engine]
end
subgraph "Cloud Computing Layer"
    C1[Data Lakes & Distributed Storage]
    C2[AI Training & Deep Learning]
    C3[Large-Scale Data Analysis]
    C4[Security Management & Device Authentication]
    C5[API & Open Platforms]
end
subgraph "Application Layer"
    D1[Smart Manufacturing]
    D2[Smart Cities]
    D3[Smart Healthcare]
    D4[Smart Energy]
    D5[Autonomous Vehicles]
end
%% Connectivity
A1 -->|Sensor Data| B1
A2 -->|State Monitoring| B2
A3 -->|Local Decision-Making| B4
A4 -->|Image Processing| B4
A5 -->|Physiological Data| B3
A6 -->|Task Execution| B3
B1 -->|Data Aggregation| C1
B2 -->|Real-Time Computation| C2
B3 -->|Low-Latency Inference| C3
B4 -->|Preprocessed Data| C4
C1 -->|Storage & Management| D1
C2 -->|Model Training| D2
C3 -->|Data Analysis| D3
C4 -->|Security Compliance| D4
C5 -->|Industry APIs| D5

Diagram Explanation

- Device Layer (Edge): Responsible for data collection, including industrial sensors, smart home devices, traffic cameras, and wearable health monitors. Some devices have built-in AI processing capabilities (e.g., gesture recognition, anomaly detection).

- Edge Computing Layer: Includes edge servers, smart gateways, and 5G MEC (Multi-Access Edge Computing) for localized AI inference, such as intelligent monitoring or industrial quality inspection, reducing cloud dependency.

- Cloud Computing Layer: Provides AI training, data storage, and security management, and enables developers to access AIoT capabilities via APIs for tasks such as intelligent scheduling, predictive maintenance, and user behavior analysis.

- Application Layer: The end-user services powered by AIoT, including smart cities, intelligent manufacturing, remote healthcare, autonomous driving, and smart energy management.

This device-edge-cloud coordination transforms AIoT from simple data collection and AI analysis into an intelligent evolutionary system capable of real-time local processing while leveraging cloud optimization.
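One way to reason about which layer should handle a given task is a simple placement rule. The sketch below is a heuristic for illustration only: the function name `place_workload` and the 150 ms cloud round-trip figure are assumptions, not measured values or a standard algorithm.

```python
from enum import Enum


class Tier(Enum):
    DEVICE = "device"
    EDGE = "edge"
    CLOUD = "cloud"


# Assumed cloud round-trip time in milliseconds; illustrative, not measured.
CLOUD_ROUND_TRIP_MS = 150.0


def place_workload(latency_budget_ms, fits_on_device, is_training=False):
    """Pick the layer that should run a workload."""
    if is_training:
        # Large-scale training stays in the cloud.
        return Tier.CLOUD
    if fits_on_device:
        # Inference runs on the device whenever the model fits there.
        return Tier.DEVICE
    if latency_budget_ms < CLOUD_ROUND_TRIP_MS:
        # Too slow to reach the cloud in time, so use the edge layer.
        return Tier.EDGE
    return Tier.CLOUD
```

For example, `place_workload(50, fits_on_device=False)` would select the edge layer, matching the industrial quality inspection case described above.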

AIoT has moved from cloud-only AI computing to collaboration between edge AI and devices. The sections that follow explore AIoT applications in healthcare, agriculture, aquaculture, energy, logistics, robotics, and automated workflows, highlighting real-world use cases.

3. Real-World AIoT Applications & Examples

AIoT transforms industries such as manufacturing, energy, transportation, healthcare, and consumer electronics. Reports from McKinsey estimate that AIoT could generate trillions in economic value by enabling autonomous decision-making in connected systems.

3.1 AIoT Technology Innovations in Healthcare AI

Medical Imaging AIoT: Breakthrough in AI Edge Computing

Traditional medical imaging analysis requires doctors to review images manually. AIoT enables medical imaging devices to perform autonomous analysis, combining edge computing and AI models to achieve real-time local processing:

- AI Imaging Pre-Screening: AIoT-enabled CT, MRI, and X-ray machines can detect tumors, fractures, and abnormalities during image capture.

- Low-Latency AI Diagnosis: Traditional AI relies on cloud-based processing, but AIoT enables local AI computation on hospital servers or medical devices, improving diagnostic speed.

- Intelligent Data Sharing: AIoT interconnects medical devices, allowing hospital PACS systems to automatically archive, classify, and annotate images, enhancing doctors’ efficiency.

Case Study: A hospital deployed an AIoT imaging analysis system, achieving localized AI image processing, reducing misdiagnosis rates by 30%, and decreasing doctors’ image review time.

AIoT in Biopharmaceuticals: Smart Drug Development

Pharmaceutical research involves complex data and rigorous processes, including molecular modeling, clinical trials, and large-scale data analysis. AIoT plays a crucial role in pharmaceutical innovation:

- Smart AIoT Laboratories: AIoT sensors, combined with AI models, monitor real-time experimental data (e.g., protein structure changes), optimizing parameters and improving success rates.

- Automated Drug Synthesis: AIoT controls automated experimental equipment, adjusting formulations based on AI computations to enhance drug development efficiency.

- AI Processing of Biological Data: AIoT enables gene sequencing devices to process DNA fragments locally, increasing data processing speed.

Case Study: A biotech company leveraged AIoT sensors + high-performance computing clusters to accelerate cancer drug research, reducing development time by 40%.

3.2 AIoT in Precision Agriculture

Traditional farming relies on human experience, while AIoT integrates drones, smart sensors, and agricultural big data to automate and optimize farming operations:

- AIoT Soil Monitoring: Sensors measure moisture, pH, and nutrient levels, with AIoT calculating the optimal fertilization strategy.

- AI-Powered Drone Surveillance: Drones, using computer vision, analyze crop health and detect diseases for precision spraying.

- Automated Greenhouse Control: AIoT monitors temperature, humidity, and CO₂ levels, automatically adjusting irrigation, ventilation, and lighting for unmanned greenhouse management.
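The greenhouse control loop can be approximated with a hysteresis rule, which keeps ventilation from switching rapidly when the temperature hovers near a single setpoint. This is a minimal sketch: the 28 °C and 25 °C limits and the function names are assumptions chosen for the example, and a production system would likely combine several sensors.

```python
def fan_state(temp_c, fan_on, on_above=28.0, off_below=25.0):
    """Return the new fan state given the temperature and the current state."""
    if temp_c > on_above:
        return True       # too warm: start ventilating
    if temp_c < off_below:
        return False      # cool enough: stop ventilating
    return fan_on         # inside the band: keep the current state


def simulate(temps, fan_on=False):
    """Apply the rule to a sequence of readings and record each state."""
    states = []
    for temp in temps:
        fan_on = fan_state(temp, fan_on)
        states.append(fan_on)
    return states
```

The same pattern applies to irrigation or lighting by swapping the measured quantity and the two limits.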

Case Study: A smart farm in the Netherlands utilized AIoT-enabled robotic crop monitoring, achieving automated harvesting and precision fertilization, reducing water usage by 25% and increasing yield by 30%.

3.3 AIoT in Smart Aquaculture

Modern aquaculture requires precise environmental control. AIoT enhances automation and intelligence in fish farming:

- AI Prediction of Fish Health: AIoT sensors monitor water temperature, oxygen levels, and pH, with AI automatically optimizing feed distribution.

- Intelligent Water Quality Management: AIoT and edge computing adjust water flow and oxygen levels, preventing mass fish mortality due to poor water quality.

- Fish Behavior Analysis: AIoT cameras monitor fish activity, detecting early signs of disease to reduce losses.
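Water quality management depends on acting before conditions become dangerous. The sketch below estimates how long until dissolved oxygen reaches a critical level by extrapolating the trend between the oldest and newest samples. The 4.0 mg/L limit and the function name are illustrative assumptions, and a straight-line extrapolation is a deliberately simple stand-in for a trained model.

```python
def minutes_until_critical(samples, critical_mg_l=4.0):
    """Estimate minutes until oxygen falls to the critical level.

    samples: list of (minute, dissolved_oxygen_mg_l) tuples, oldest first.
    Returns 0.0 if already critical, None if the level is not falling.
    """
    (t0, v0), (t1, v1) = samples[0], samples[-1]
    if v1 <= critical_mg_l:
        return 0.0
    slope = (v1 - v0) / (t1 - t0)  # change in mg/L per minute
    if slope >= 0:
        return None                # stable or rising: no alert needed
    return (critical_mg_l - v1) / slope
```

An edge controller could start aeration whenever the estimate drops below a chosen lead time.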

Case Study: A Norwegian aquaculture company deployed AIoT underwater cameras + sensors, automatically monitoring fish health, reducing farming risks by 15%, and increasing yield by 20%.

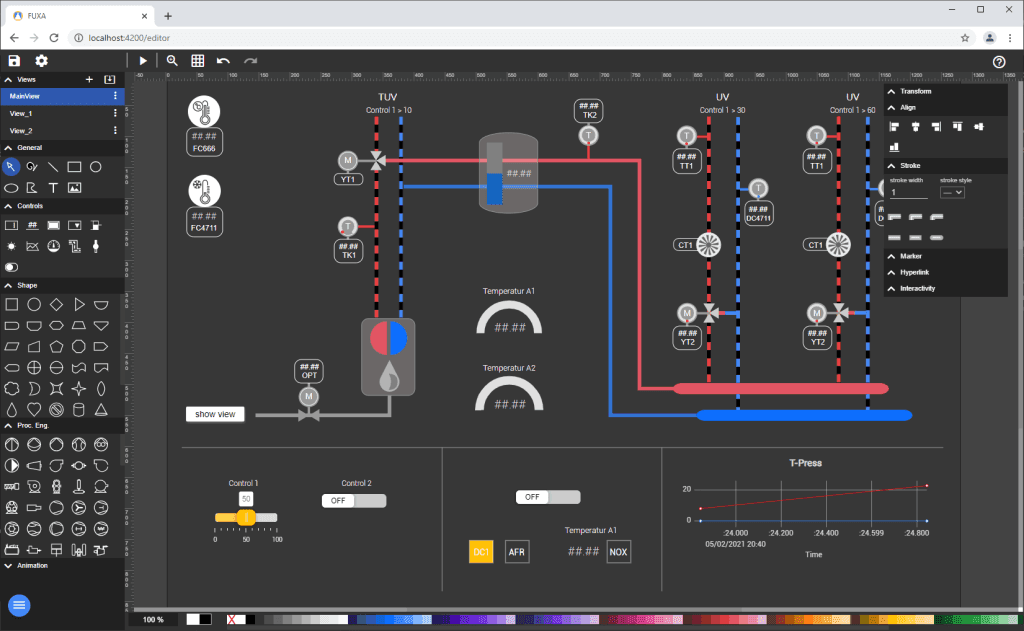

3.4 AIoT in Smart Grids

The energy industry is undergoing an AIoT transformation. Traditional power grids rely on manual adjustments, while AIoT enables automated grid optimization:

- AI Power Demand Prediction: AI forecasts electricity demand so that generation can be scheduled to match it, reducing electricity waste.

- Intelligent Load Balancing: AI optimizes wind and solar power distribution dynamically.

- Predictive Maintenance of Power Equipment: AIoT devices detect transformer failures in advance, minimizing downtime.
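Demand prediction can be illustrated with simple exponential smoothing, a basic forecasting technique used here in place of the more sophisticated models a utility would deploy. The function name and the smoothing factor `alpha` are assumptions for the example.

```python
def forecast_next(load_mw, alpha=0.5):
    """Forecast the next load value with simple exponential smoothing.

    load_mw: past load readings in megawatts, oldest first.
    alpha:   weight given to the newest reading (0 < alpha <= 1).
    """
    level = load_mw[0]
    for reading in load_mw[1:]:
        # Blend the newest reading with the running estimate.
        level = alpha * reading + (1 - alpha) * level
    return level
```

A higher `alpha` tracks recent changes more closely, while a lower value smooths out short-lived spikes.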

Case Study: A German energy company implemented an AIoT power grid monitoring system, enabling intelligent scheduling of wind and solar power, increasing green energy utilization by 15%.

3.5 AIoT in Smart Warehousing

The logistics industry is shifting from manual management to automated operations, with AIoT revolutionizing warehousing, transportation, distribution, and supply chain management.

Traditional warehouses rely on manual inventory tracking, fixed storage layouts, and human-based scheduling, but AIoT enhances logistics efficiency:

- Automated Goods Tracking: AIoT RFID tracking ensures full visibility of shipments, preventing losses and delays.

- Smart Shelf Management: AIoT integrates computer vision and deep learning to optimize storage locations and improve picking efficiency.

- AI-Powered Inventory Forecasting: AI predicts demand trends, dynamically adjusting stock levels to reduce overstock and shortages.
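Inventory forecasting ultimately feeds a replenishment decision. The sketch below uses the textbook reorder-point formula (average daily demand times lead time, plus safety stock) with a plain average standing in for an AI demand forecast. The function names and sample figures are hypothetical.

```python
from statistics import mean


def reorder_point(daily_demand, lead_time_days, safety_stock):
    """Stock level at which a new order should be placed."""
    expected_demand = mean(daily_demand) * lead_time_days
    return expected_demand + safety_stock


def needs_reorder(on_hand, daily_demand, lead_time_days, safety_stock):
    """True when current stock has fallen to or below the reorder point."""
    return on_hand <= reorder_point(daily_demand, lead_time_days, safety_stock)
```

Replacing `mean(daily_demand)` with a model's predicted demand is what lets stock levels adjust dynamically to trends.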

Case Study: Amazon deployed automated warehouse systems, leveraging robots + AI computations to optimize inventory placement, boosting picking efficiency by 40% and reducing labor costs by 30%.

3.6 AIoT in Smart Transportation

The biggest challenges in logistics include inefficient scheduling, high energy consumption, and non-optimized delivery routes. AIoT improves transportation efficiency through real-time data analysis, intelligent dispatch, and autonomous driving:

- AIoT Fleet Management: Uses GPS, sensors, and AI algorithms to optimize routing and reduce empty miles.

- Smart Cold Chain Logistics: AIoT continuously monitors temperature and humidity, automatically adjusting cooling systems to maintain ideal conditions for perishable goods.

- Autonomous Delivery Vehicles: AIoT powers self-driving trucks and drones, paving the way for fully automated logistics networks.
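Route optimization in fleet management can be sketched with the nearest-neighbor heuristic: from the current position, always drive to the closest unvisited stop. It is a simple greedy method that does not guarantee the shortest route, and it is shown here only as an illustration. The function name and the use of straight-line distance on (x, y) coordinates are assumptions.

```python
import math


def nearest_neighbor_route(depot, stops):
    """Order stops greedily by visiting the closest remaining one next."""
    route = []
    current = depot
    remaining = list(stops)  # copy so the caller's list is not modified
    while remaining:
        nearest = min(remaining, key=lambda stop: math.dist(current, stop))
        remaining.remove(nearest)
        route.append(nearest)
        current = nearest
    return route
```

Production dispatch systems would also account for road networks, traffic, and delivery windows.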

Case Study: FedEx deployed AIoT-based sensor networks, reducing logistics delays by 20% and cutting operating costs by 15%.

3.7 AIoT in Robotics

AIoT extends beyond data analytics to robotics, autonomous systems, and automated workflows.

As robotics advances, AIoT enables intelligent robots across multiple industries:

- Autonomous Inspection Robots: AIoT integrates computer vision + IoT sensors, allowing robots to autonomously inspect factories, warehouses, and airports.

- Smart Security Robots: AIoT-powered robots perform perimeter monitoring, facial recognition, and automated alerts, enhancing security.

- AIoT Industrial Robots: AIoT robots self-learn manufacturing processes, dynamically adjusting production parameters for improved flexibility.

Case Study: Boston Dynamics’ AIoT-enabled robot Spot, using 5G + computer vision, autonomously inspects factories, improving workplace safety.

3.8 AIoT in Automated Workflows

AIoT optimizes production, inspection, warehousing, and maintenance by enabling full automation:

- AIoT-Powered Quality Inspection: AIoT uses computer vision to detect product defects, improving quality control.

- Autonomous Equipment Maintenance: AIoT enables predictive maintenance, adjusting service schedules based on equipment performance, and reducing downtime.
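A common starting point for predictive maintenance is flagging sensor readings that fall far outside a machine's normal range. The sketch below uses a z-score test against a baseline of healthy readings. The function name, the vibration figures, and the limit of three standard deviations are assumptions, and real systems often use learned models instead.

```python
from statistics import mean, stdev


def is_anomalous(baseline, reading, z_limit=3.0):
    """Flag a reading that deviates strongly from healthy baseline data.

    baseline: at least two readings taken while the equipment was healthy.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is unusual.
        return reading != mu
    return abs(reading - mu) / sigma > z_limit
```

Running this check on an edge device lets service be scheduled when readings start to drift, rather than on a fixed calendar.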

Case Study: A German automaker deployed AIoT production line inspection systems, leveraging AIoT cameras and edge computing to detect defects, improving product qualification rates by 30%.

4. AIoT Technology in Extreme Environments

AIoT is not only applicable to commercial and industrial settings but also plays a vital role in aerospace, deep-sea exploration, and polar research.

4.1 AIoT in Space Exploration

Space is a challenging environment where real-time adjustments are difficult. AIoT enables autonomous adaptation in spacecraft:

- AIoT Satellite Networks: AIoT satellites possess self-navigation, coordinated communication, and data analysis capabilities, enhancing mission efficiency.

- Smart Mars Rovers: AIoT allows rovers to adjust tasks autonomously, reducing dependence on Earth-based control.

Case Study: NASA’s Perseverance Rover, equipped with AI vision, autonomously analyzes rocks and optimizes routes, improving mission success rates.

4.2 AIoT in Deep-Sea Exploration

Harsh ocean environments limit human intervention, making AIoT crucial for deep-sea research:

- AIoT Autonomous Submarines: Equipped with sensors and AI computing, these submarines automatically adjust routes and collect data efficiently.

- Marine Climate Monitoring: AIoT sensors deployed worldwide monitor ocean currents, temperatures, and ecosystems, providing accurate climate data.

Case Study: Japan developed an AIoT deep-sea exploration system integrating AI vision and autonomous navigation, improving data collection accuracy by 40%.

5. Final Thoughts: Why AIoT Matters for the Future

AIoT is more than AI + IoT: it is an autonomous intelligence system that enables devices to self-learn, optimize, and make independent decisions. It is reshaping healthcare, agriculture, energy, logistics, robotics, and other industries with self-adaptive intelligence.

Frequently Asked Questions (FAQ)

1. What is AIoT?

AIoT, or Artificial Intelligence of Things, combines AI’s intelligence with IoT’s connectivity to enable real-time decision-making, automation, and predictive insights.

2. What does AIoT mean?

AIoT refers to the integration of AI algorithms into IoT devices, allowing them to process data locally, self-learn, and make autonomous decisions.

3. How is AIoT different from AI + IoT?

While AI + IoT typically runs AI in the cloud, AIoT combines devices, edge computing, and cloud AI to deliver faster, more autonomous, and context-aware responses.

4. What are examples of AIoT?

Examples include AI-powered medical imaging, precision farming with smart drones, intelligent power grids, autonomous robots, and automated warehouses.

Recommended Reading

If you’re interested in learning more about AIoT and related technologies, check out these articles from our blog:

- AI and IoT: Understanding the Difference and Integration – A detailed comparison between AI + IoT and AIoT, with real-world integration examples.

- AI-Driven IoT: How Big Models are Shaping the Future of AI-Driven IoT – How AI-Driven IoT Differs from Traditional AIoT.

- AIoT Leads the Next Horizon of IoT: Bridging Embedded AI Development with IoT Innovation – Learn how Embedded AI optimizes smart city systems with real-time data processing and decision-making solutions.

- DeepSeek + AIoT Evolution Guide – How DeepSeek Makes IoT Smart Devices Smarter and More Efficient.