Uncover the Latest in AI and IoT

Cutting-edge IoT technologies, AI advancements, and practical applications, plus open-source libraries and development tools for tech-savvy readers.

ZedIoT Blogs

Edge AI deployments rarely fail first on model accuracy. They fail when teams cannot see input health, inference health, version context, or diagnostic evidence. This article explains why observability should be designed as a core Edge AI capability from ESP32-class devices to Linux edge boxes.

Legacy industrial equipment projects usually fail when teams push PLCs, meters, and serial devices straight into the cloud without a stable edge boundary. This article outlines a safer brownfield-to-cloud path built around asset inventory, edge normalization, reliable uplink, and controlled write-back.

Edge AI fleets become hard to operate when firmware, model, and config are hidden behind one bundle version. This article explains how to separate those version planes so rollout, rollback, and troubleshooting stay controllable.
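
To make the idea of separate version planes concrete, here is a minimal sketch that assumes each device reports its firmware, model, and config versions as three independent fields. The names `DeviceVersions` and `planes_to_update` are hypothetical and do not come from any specific platform. Comparing reported against desired versions plane by plane shows which plane a rollout or rollback actually touches.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class DeviceVersions:
    """Hypothetical per-device record: one version field per plane."""
    firmware: str
    model: str
    config: str


def planes_to_update(reported: DeviceVersions, desired: DeviceVersions) -> list[str]:
    """Return the names of planes whose reported version differs from the desired one."""
    return [
        f.name
        for f in fields(DeviceVersions)
        if getattr(reported, f.name) != getattr(desired, f.name)
    ]


if __name__ == "__main__":
    reported = DeviceVersions(firmware="1.4.2", model="det-v7", config="c-102")
    desired = DeviceVersions(firmware="1.4.2", model="det-v8", config="c-102")
    # Only the model plane changes, so only the model needs to roll out (or back).
    print(planes_to_update(reported, desired))  # ['model']
```

Because each plane is compared on its own, a model rollback leaves firmware and config untouched. A single bundle version would hide that distinction.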

Global IoT deployments rarely fail because devices cannot connect. They fail because eSIM provisioning, device identity, regional policy, config versions, and operations feedback are not controlled as one lifecycle system. This article explains why lifecycle control matters more than connectivity alone.

Agentic IoT becomes fragile when A2A, MCP, OPC UA, and Modbus are treated as interchangeable layers. A more stable architecture uses A2A for agent coordination, MCP for controlled tool access, OPC UA for asset semantics, and Modbus for field execution.

Industrial edge gateways that only forward data usually lose control of buffering, replay order, duplicate writes, and acknowledgment recovery during weak network conditions. This article shows how a practical store-and-forward design should work.
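
The core of store-and-forward can be sketched in a few lines, under stated assumptions. The gateway tags each record with a monotonic sequence number, keeps it until upstream acknowledges it, and replays pending records in order. The receiver applies each sequence number at most once, so a resent record does not become a duplicate write. `StoreAndForwardBuffer` and `deliver` are hypothetical names. The in-memory buffer stands in for the persistent storage a real gateway would need, and the drop-oldest overflow policy is only one possible choice.

```python
from collections import OrderedDict


class StoreAndForwardBuffer:
    """In-memory sketch; a real gateway would persist pending records to disk."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self._next_seq = 0
        self._pending: "OrderedDict[int, bytes]" = OrderedDict()

    def enqueue(self, payload: bytes) -> int:
        """Store a record with the next sequence number and return that number."""
        if len(self._pending) >= self.capacity:
            # Assumed overflow policy: drop the oldest unacknowledged record.
            self._pending.popitem(last=False)
        seq = self._next_seq
        self._next_seq += 1
        self._pending[seq] = payload
        return seq

    def replay(self) -> list[tuple[int, bytes]]:
        """Return pending records in sequence order for (re)transmission."""
        return list(self._pending.items())

    def ack(self, seq: int) -> bool:
        """Remove a record once upstream confirms it; duplicate acks are harmless."""
        return self._pending.pop(seq, None) is not None


def deliver(store: dict[int, bytes], seq: int, payload: bytes) -> bool:
    """Receiver side: apply each sequence number at most once (idempotent write)."""
    if seq in store:
        return False
    store[seq] = payload
    return True
```

Acknowledgment recovery falls out of this design. If an ack is lost, the record stays pending and is replayed, and the receiver's sequence check keeps the replay from writing twice.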

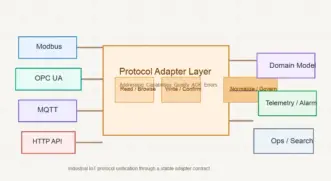

Industrial IoT platforms that treat Modbus, OPC UA, MQTT, and HTTP as isolated drivers usually lose control of semantic mapping, error handling, command confirmation, and data quality. This article outlines a safer protocol adapter layer design.
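
As a rough illustration of what an adapter layer adds beyond a raw driver, the sketch below maps a raw register value to a named, scaled point and carries an explicit quality flag. A failed read therefore still yields a reading, marked bad, so it is never silently dropped. `PointMapping`, `Reading`, and `normalize` are hypothetical names. The unsigned 16-bit range check and the two-value quality field are simplifying assumptions; production systems typically use richer quality codes.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PointMapping:
    """Hypothetical mapping from a raw protocol address to a semantic point."""
    point: str      # semantic name, e.g. "line1.pump.discharge_pressure"
    address: int    # raw register address (protocol-specific)
    scale: float    # multiplier from raw units to engineering units
    unit: str


@dataclass(frozen=True)
class Reading:
    point: str
    value: Optional[float]
    unit: str
    quality: str    # "good" or "bad" in this sketch


def normalize(mapping: PointMapping, raw: Optional[int]) -> Reading:
    """Convert a raw register value (treated as unsigned 16-bit) into a semantic reading."""
    if raw is None or not 0 <= raw <= 0xFFFF:
        # Read failed or value out of range: keep the point, flag it bad, drop the value.
        return Reading(mapping.point, None, mapping.unit, "bad")
    return Reading(mapping.point, raw * mapping.scale, mapping.unit, "good")
```

Upstream consumers then work with `point`, `unit`, and `quality` regardless of whether the value came from Modbus, OPC UA, MQTT, or HTTP.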

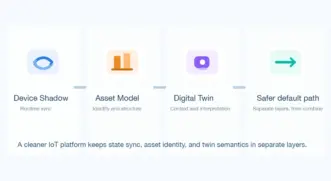

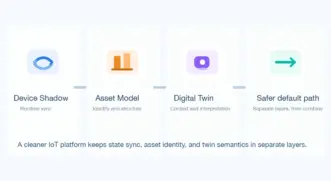

Many IoT platforms mix device shadow, digital twin, and asset model into one object and end up with bloated state models, weak search, and confused operations. This article explains what each one is for and how to stack them more cleanly.

OPC UA, MQTT, and Modbus are not simple replacements for one another in industrial IoT. In many practical architectures, Modbus stays at device access, OPC UA unifies edge semantics, and MQTT carries northbound events and decoupled platform integration.

How much does a Tuya app cost? Learn OEM vs. custom pricing, typical timelines, and when to move beyond OEM, based on real IoT projects.

The hard part of Edge AI OTA is not pushing a new package. It is designing staged rollout, rollback, and remote recovery for devices whose firmware, model, and configuration evolve together. This article explains how to do it from ESP32 to RK3566.

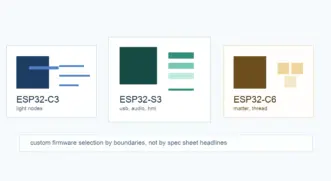

Choosing between ESP32-C3, ESP32-S3, and ESP32-C6 is less about which chip is newer and more about wireless roadmaps, USB and audio peripherals, runtime headroom, and long-term firmware complexity. This article gives a more practical selection path for custom firmware projects.

Building an ESP32 Modbus-RTU bridge is not mainly about connecting a MAX485 board. The real issues are RS485 electrical design, UART ownership, polling cadence, and the operational boundaries of ESPHome modbus_controller. This article explains how to bridge PLC data into ESPHome and Home Assistant more reliably.

Learn how to design a scalable Tuya enterprise integration with a multi-tenant architecture. This guide covers tenant isolation, permissions, and IoT platform best practices.

Learn how to design Tuya DPs (data points) for IoT devices. This guide covers DP structure, examples, and best practices for scalable Tuya IoT development.